The Scalability Trilemma: Layer 1 and Layer 2 Solutions

September 24, 2021

6 min

Scalability and decentralisation are among the core promises of blockchain technology. They have become a slogan across DeFi, yet both are hard to achieve without sacrificing the security that a blockchain must guarantee.

Much more than a Hamletic Dilemma

The challenge that blockchain is facing is not new. Around 2000, the computer scientist Eric Brewer formulated the CAP theorem, according to which a distributed data store can guarantee only two of three properties at the same time: consistency, availability and partition tolerance (CAP).

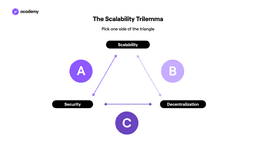

The heir of this theorem is today’s blockchain trilemma, popularised by Vitalik Buterin, according to which a blockchain must sacrifice at least one of three properties: security, decentralisation or scalability.

For example: Bitcoin is secure and decentralised, but not scalable; Ripple is secure and scalable, but tends towards centralisation.

DeFi is not willing to give up any of the three properties and is struggling to satisfy them all at once. The hardest of the three is scalability, which is why the problem is often called the Scalability Trilemma.

In fact, most protocols were not designed for a market as large as the current one, and are now suffering from high transaction costs and latency.

What kind of solutions are there?

Some industry projects are working on the base protocol, extending it where possible, or creating entirely new blockchains designed to solve the trilemma. These are called Layer 1 solutions. Others build protocols on top of existing blockchains. These are called Layer 2 solutions.

Scalability on Layer 1

Working on aspects of the trilemma at Layer 1 level means working on the base protocol, i.e. the rules of the blockchain and its central structure.

Ethereum, like Bitcoin, needs to improve its scalability, and to do so it is applying two of the most common approaches:

- Changing consensus mechanism

- Sharding

The way the consensus mechanism is designed determines the level of decentralisation, scalability and security. This is why there are so many consensus mechanisms: each blockchain tries its own variant to solve this trilemma.

The two main consensus mechanisms adopted are Proof-of-Work (PoW) and Proof-of-Stake (PoS), which are based on two very different logics. PoW relies on computational power: miners compete to solve a hash puzzle, which is time-consuming and energy-intensive.
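To make the cost of Proof-of-Work concrete, here is a minimal sketch of the kind of hash puzzle involved. It is a toy, not Bitcoin's actual algorithm: the function names `mine` and `verify`, the string-based block and the "leading zero hex digits" difficulty rule are all assumptions made for illustration.

```python
import hashlib
from typing import Tuple


def mine(block_data: str, difficulty: int) -> Tuple[int, str]:
    """Try nonces until the SHA-256 digest starts with `difficulty` zero hex digits."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1  # brute force: there is no shortcut, only more attempts


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking a solution takes a single hash, unlike finding one."""
    digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
    return digest.startswith("0" * difficulty)


# Hypothetical block content; each extra zero multiplies the expected work by 16.
nonce, digest = mine("alice pays bob 1 coin", difficulty=4)
print(nonce, digest)
print(verify("alice pays bob 1 coin", nonce, difficulty=4))
```

Finding the nonce takes tens of thousands of attempts on average at this difficulty, while verifying it takes one. That asymmetry is what makes the scheme secure, and also what makes it slow and resource-hungry.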

PoS, on the other hand, is based on putting tokens at stake to guarantee the reliability of validators: this creates a reward system that incentivises positive behaviour on the network, and does not require high energy consumption.
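The idea can be sketched in a few lines. This is a simplified model under stated assumptions, not any specific chain's protocol: validators are chosen with probability proportional to their stake, honest work earns a reward, and misbehaviour burns part of the stake. The names `pick_validator` and `settle`, and the reward and penalty values, are hypothetical.

```python
import random


def pick_validator(stakes: dict, rng: random.Random) -> str:
    """Choose a validator with probability proportional to its stake."""
    validators = list(stakes)
    weights = [stakes[v] for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


def settle(stakes: dict, validator: str, honest: bool,
           reward: float = 1.0, penalty: float = 0.5) -> None:
    """Reward honest validation; burn a share of the stake otherwise (assumed rule)."""
    if honest:
        stakes[validator] += reward
    else:
        stakes[validator] *= (1 - penalty)


stakes = {"alice": 60.0, "bob": 30.0, "carol": 10.0}
rng = random.Random(42)  # fixed seed so the run is reproducible

# Over many rounds, selection frequency tracks the size of the stake.
counts = {name: 0 for name in stakes}
for _ in range(10_000):
    counts[pick_validator(stakes, rng)] += 1
print(counts)

# A validator caught misbehaving loses part of what it put at stake.
settle(stakes, "carol", honest=False)
print(stakes["carol"])
```

No puzzle is solved here: choosing the next validator is a cheap weighted draw, which is why this family of mechanisms does not need the energy budget of PoW.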

Switching from one variant of PoS to another is fairly straightforward, even though it affects many elements of an ecosystem.

It is nothing compared to switching from PoW to PoS, as Ethereum is doing. This change affects tokenomics and replaces mining with staking. It also means rethinking the very way in which transactions are validated, the foundation on which every smart contract and every dapp relies.

Sharding, on the other hand, concerns the architecture of the blockchain. It splits the base blockchain into multiple partitions (shards), each handled by a different set of validators, so that the data load is distributed. The network as a whole becomes faster, but communication between shards becomes more intricate.
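A rough sketch shows both sides of this trade-off. It assumes, purely for illustration, that accounts are assigned to shards by hashing their name and that a transaction touching two shards needs special handling. The names `shard_for` and `route` and the shard count are hypothetical and do not describe Ethereum's actual design.

```python
import hashlib
from collections import defaultdict

NUM_SHARDS = 4  # assumed value for the example


def shard_for(account: str, num_shards: int = NUM_SHARDS) -> int:
    """Deterministically map an account to a shard by hashing its name."""
    digest = hashlib.sha256(account.encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def route(transactions):
    """Split transactions into per-shard queues and a cross-shard queue."""
    shards = defaultdict(list)
    cross_shard = []
    for sender, receiver, amount in transactions:
        if shard_for(sender) == shard_for(receiver):
            # Both accounts live on one shard: it can process this alone.
            shards[shard_for(sender)].append((sender, receiver, amount))
        else:
            # Two shards must coordinate: this is the added complexity.
            cross_shard.append((sender, receiver, amount))
    return shards, cross_shard


transactions = [
    ("alice", "bob", 5),
    ("carol", "dave", 2),
    ("erin", "frank", 7),
    ("alice", "erin", 1),
    ("bob", "alice", 3),
]
shards, cross_shard = route(transactions)
for shard_id, queue in sorted(shards.items()):
    print(f"shard {shard_id}: {queue}")
print("cross-shard:", cross_shard)
```

Transactions that stay inside one shard can be processed in parallel with those of other shards. Everything that lands in the cross-shard queue needs extra messaging between validator sets.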

Scalability on Layer 2

Much more widespread, however, are projects that focus on Layer 2, i.e. protocols built on top of existing blockchains to improve them. Because the base protocol is left untouched, development and experimentation are faster.

For example, the Lightning Network is a Layer 2 solution for Bitcoin, which is a Layer 1 blockchain.

There are four scaling models currently applied on Layer 2:

- State Channels

- Nested Blockchains

- Rollups

- Sidechains

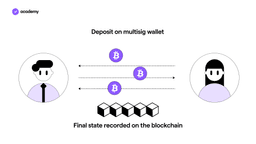

State Channels are two-way communication channels between participants in a network that allow them to interact off-chain. In this way, they can exchange data and transactions quickly without having to depend on the work of miners and validators.

This is possible thanks to a smart contract or multi-sig wallet that governs the interaction and records only its opening and closing states on the blockchain.

This is the model underpinning the Lightning Network and Ethereum’s Raiden Network, which will also allow smart contracts to be executed in these off-chain channels.
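The lifecycle of a state channel can be sketched as follows. This is a deliberately simplified model, not the Lightning or Raiden protocol: the `Chain` and `StateChannel` classes are hypothetical, and the signatures and dispute handling that real channels depend on are left out.

```python
from dataclasses import dataclass, field


@dataclass
class Chain:
    """Stand-in for the Layer 1 blockchain: balances plus a log of on-chain records."""
    balances: dict
    log: list = field(default_factory=list)


class StateChannel:
    def __init__(self, chain: Chain, a: str, b: str, deposit_a: int, deposit_b: int):
        # On-chain record 1: both parties lock a deposit to open the channel.
        chain.balances[a] -= deposit_a
        chain.balances[b] -= deposit_b
        chain.log.append(("open", a, b))
        self.chain = chain
        self.state = {a: deposit_a, b: deposit_b}
        self.version = 0
        self.closed = False

    def pay(self, sender: str, receiver: str, amount: int) -> None:
        """Off-chain update: only the two participants see it, nothing is mined."""
        if self.closed:
            raise RuntimeError("channel is closed")
        if self.state[sender] < amount:
            raise ValueError("insufficient channel balance")
        self.state[sender] -= amount
        self.state[receiver] += amount
        self.version += 1

    def close(self) -> None:
        # On-chain record 2: only the final state is settled on the blockchain.
        for party, amount in self.state.items():
            self.chain.balances[party] += amount
        self.chain.log.append(("close", dict(self.state)))
        self.closed = True


chain = Chain(balances={"alice": 10, "bob": 10})
channel = StateChannel(chain, "alice", "bob", deposit_a=5, deposit_b=5)
channel.pay("alice", "bob", 2)
channel.pay("bob", "alice", 1)
channel.pay("alice", "bob", 3)
channel.close()

print(chain.balances)
print(len(chain.log), "on-chain records for", channel.version, "payments")
```

However many payments flow through the channel, the blockchain only ever sees two records: the opening and the closing.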

Nested blockchains are still few and far between. OmiseGO, an Ethereum-based project, is working on an implementation of Plasma, a nested blockchain design for Ethereum.

Plasma is structured as follows:

- a base blockchain that dictates the rules of the system, without performing transactions

- a series of blockchain layers, each connected to the previous one in a parent-child chain structure.

Parent-chains delegate the execution of operations to child-chains, which report the result to the parents. This spreads the load of operations over several blockchains and speeds up execution.
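The delegation pattern described above can be sketched as follows. The sketch assumes, for illustration only, that a child chain reports its result as a hash of its state, and it omits the exit and fraud-proof machinery of real Plasma designs. `ParentChain`, `ChildChain` and `state_digest` are hypothetical names.

```python
import hashlib
import json


def state_digest(balances: dict) -> str:
    """Compact fingerprint of a child chain's state (assumed reporting format)."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


class ParentChain:
    """Sets the rules and stores reports, but executes no transfers itself."""

    def __init__(self):
        self.commitments = []

    def report(self, child_id: str, digest: str) -> None:
        self.commitments.append((child_id, digest))


class ChildChain:
    def __init__(self, child_id: str, parent: ParentChain, balances: dict):
        self.child_id = child_id
        self.parent = parent
        self.balances = dict(balances)

    def execute(self, transfers) -> str:
        """Run a batch of transfers locally, then report one digest to the parent."""
        for sender, receiver, amount in transfers:
            if self.balances.get(sender, 0) < amount:
                continue  # skip transfers the sender cannot afford
            self.balances[sender] -= amount
            self.balances[receiver] = self.balances.get(receiver, 0) + amount
        digest = state_digest(self.balances)
        self.parent.report(self.child_id, digest)
        return digest


root = ParentChain()
payments = ChildChain("payments", root, {"alice": 10, "bob": 0})
games = ChildChain("games", root, {"carol": 5, "dave": 5})

payments.execute([("alice", "bob", 4), ("alice", "bob", 1)])
games.execute([("carol", "dave", 2)])

print(payments.balances, games.balances)
print(len(root.commitments), "reports stored on the parent chain")
```

The parent chain stores one short report per batch instead of every transfer, which is how the load is spread across the child chains.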

Ethereum rollups use a similar mechanism: transactions and smart contracts are executed off-chain, and the results are posted back to the main blockchain in batches.

Sidechains are blockchains that run alongside the main blockchain. Unlike nested blockchains, they are less integrated into the core blockchain. In fact, they can adopt:

- a consensus mechanism different from that of the mainchain to promote efficiency

- a specific utility token to facilitate data transfers between the sidechain and the mainchain.

An example of a sidechain is the Liquid Network, which is connected to the Bitcoin blockchain.
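One common way to move value between a mainchain and a sidechain is a two-way peg: coins are locked on the mainchain and an equal amount of a sidechain token is issued, then burned again to unlock the originals. The sketch below illustrates that general pattern under simplifying assumptions. It is not the Liquid Network's actual mechanism, and the `TwoWayPeg` class and its methods are hypothetical.

```python
class TwoWayPeg:
    """Toy model: mainchain balances, a locked pool, and sidechain token balances."""

    def __init__(self, main_balances: dict):
        self.main = dict(main_balances)
        self.locked = 0   # mainchain coins held by the peg
        self.side = {}    # sidechain token balances

    def peg_in(self, user: str, amount: int) -> None:
        """Lock coins on the mainchain and issue the same amount on the sidechain."""
        if self.main.get(user, 0) < amount:
            raise ValueError("insufficient mainchain balance")
        self.main[user] -= amount
        self.locked += amount
        self.side[user] = self.side.get(user, 0) + amount

    def side_transfer(self, sender: str, receiver: str, amount: int) -> None:
        """Transfers on the sidechain follow the sidechain's own rules."""
        if self.side.get(sender, 0) < amount:
            raise ValueError("insufficient sidechain balance")
        self.side[sender] -= amount
        self.side[receiver] = self.side.get(receiver, 0) + amount

    def peg_out(self, user: str, amount: int) -> None:
        """Burn sidechain tokens and release the matching mainchain coins."""
        if self.side.get(user, 0) < amount:
            raise ValueError("insufficient sidechain balance")
        self.side[user] -= amount
        self.locked -= amount
        self.main[user] = self.main.get(user, 0) + amount


peg = TwoWayPeg({"alice": 10, "bob": 0})
peg.peg_in("alice", 6)
peg.side_transfer("alice", "bob", 4)
peg.peg_out("bob", 4)

print(peg.main, peg.side, peg.locked)
```

The invariant to watch is that the locked pool always equals the total supply of sidechain tokens, so every token can be redeemed for a coin on the mainchain.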

Polkadot has its own variation of the sidechain, which it calls a parachain. All parachains are linked to the main chain (the Relay Chain), whose security they share.

Will the trilemma ever be resolved?

Many projects are working on it, and only the future will tell which combination will win and whether this decades-old trilemma will finally be resolved.

The biggest obstacle at the moment is the complexity involved in the proposed models, but history teaches us that technology just needs time.